| name | about | labels |
|---|---|---|
| Bug Report | Use this template for reporting a bug | kind/bug |

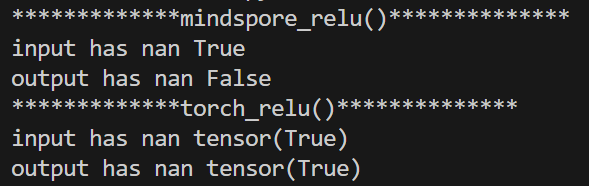

When the input contains NaN, MindSpore's `relu` operator returns 0 for the NaN elements, whereas PyTorch's `relu` returns NaN for the same elements.

Hardware Environment (Ascend/GPU/CPU): Please delete the backend not involved:

/device /CPU/

Software Environment (Mandatory):

-- MindSpore version (e.g., 1.7.0.Bxxx): 2.2.0

-- Torch version (e.g., 1.7.0.Bxxx): 2.0.0+cpu

-- Python version (e.g., Python 3.7.5): 3.8.16

-- OS platform and distribution (e.g., Linux Ubuntu 16.04): Linux Ubuntu 18.04.6 LTS

Execute Mode (Mandatory) (PyNative/Graph):

Please delete the mode not involved:

/mode pynative

    import numpy as np
    import torch
    import mindspore
    import mindspore.ops


    def mindspore_relu():
        print("*************mindspore_relu()**************")
        # tensor_50.npy is the reporter's input array (reported to contain NaN).
        input_np = np.load("./tensor_50.npy")
        input_tensor = mindspore.Tensor(input_np)
        output = mindspore.ops.relu(input=input_tensor)
        is_nan = mindspore.ops.IsNan()
        # Check for NaN before and after applying relu.
        print("input has nan", is_nan(input_tensor).any())
        print("output has nan", is_nan(output).any())


    def torch_relu():
        print("*************torch_relu()**************")
        input_np = np.load("./tensor_50.npy")
        input_tensor = torch.Tensor(input_np)
        output = torch.relu(input_tensor)
        # Same NaN checks as above, using PyTorch.
        print("input has nan", torch.isnan(input_tensor).any())
        print("output has nan", torch.isnan(output).any())


    if __name__ == "__main__":
        mindspore_relu()
        torch_relu()

Expected behavior: when the input to MindSpore's `relu` operator contains NaN, the corresponding output elements should still be NaN, as they are in PyTorch.
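As a rough illustration of how such a discrepancy can arise, the sketch below compares two scalar ReLU formulations in plain Python. The function names `relu_flushing` and `relu_propagating` are hypothetical and are not taken from MindSpore or PyTorch; this is not a claim about how either framework's kernel is actually written. It only shows that, because every ordered comparison with NaN is false, the direction of the comparison decides whether NaN becomes 0 or is passed through.

```python
import math


def relu_flushing(x: float) -> float:
    # Hypothetical formulation: `x > 0.0` is False for NaN,
    # so NaN falls through to the 0.0 branch.
    return x if x > 0.0 else 0.0


def relu_propagating(x: float) -> float:
    # Hypothetical formulation: `x <= 0.0` is False for NaN,
    # so NaN is returned unchanged.
    return 0.0 if x <= 0.0 else x


values = [-1.0, 0.0, 2.5, math.nan]
flushed = [relu_flushing(v) for v in values]
propagated = [relu_propagating(v) for v in values]

print(flushed)     # [0.0, 0.0, 2.5, 0.0]
print(propagated)  # [0.0, 0.0, 2.5, nan]
```

Both functions agree on every ordinary input and differ only on NaN, which matches the kind of mismatch reported here.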

Please assign a maintainer to check this issue.

@fangwenyi @chengxiaoli @Shawny


Thank you for your question. You can comment //mindspore-assistant to get help faster:

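The behavior of this operator for NaN input is not yet defined, and it currently differs from Torch.

This scenario will be supported in a future version.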
