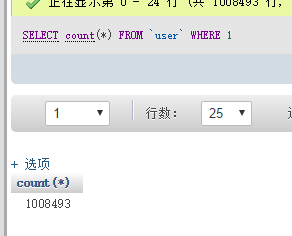

A detailed walkthrough of the code is on my blog: "I crawled the data of one million Zhihu users with Python"

Required packages:

beautifulsoup4

html5lib

image

requests

redis

PyMySQL

Install all dependencies with pip:

    pip install \
        Image \
        requests \
        beautifulsoup4 \
        html5lib \
        redis \
        PyMySQL
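
After installing, it can help to confirm that every dependency is importable before starting a long crawl. The following is a minimal sketch, not part of the project. The mapping from pip names to import names is written by hand, and the `PIL` entry is an assumption: the `Image` distribution is expected to pull in Pillow, which provides the `PIL` module.

```python
import importlib.util

# pip distribution name -> module name used in `import` statements.
# Assumption: the `Image` distribution installs Pillow, which provides PIL.
REQUIRED = {
    "beautifulsoup4": "bs4",
    "html5lib": "html5lib",
    "Image": "PIL",
    "requests": "requests",
    "redis": "redis",
    "PyMySQL": "pymysql",
}


def missing_packages(required):
    """Return the pip names whose import module cannot be found."""
    return [
        pip_name
        for pip_name, module in required.items()
        if importlib.util.find_spec(module) is None
    ]


missing = missing_packages(REQUIRED)
if missing:
    print("Missing packages:", ", ".join(missing))
else:
    print("All dependencies are importable.")
```

If anything is reported missing, rerun the pip command for just those names.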

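The runtime environment must support Chinese text

Tested on Python 3.5; other environments are not guaranteed to work perfectly

MySQL and Redis must be installed

Edit config.ini with your MySQL and Redis settings, and fill in your Zhihu account

Import init.sql into the database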
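
To illustrate the config.ini step, here is a minimal sketch of reading MySQL, Redis, and account settings with the standard `configparser` module. The section names (`db`, `redis`, `zhihu`) and every key and value below are assumptions made for illustration; check the config.ini shipped with the project for the real names.

```python
import configparser

# Hypothetical layout: section and key names are assumptions and may not
# match the project's real config.ini.
SAMPLE_CONFIG = """
[db]
host = 127.0.0.1
port = 3306
user = root
password = secret
db_name = zhihu

[redis]
host = 127.0.0.1
port = 6379

[zhihu]
username = you@example.com
password = your-password
"""


def load_settings(text):
    """Parse INI text and return MySQL, Redis, and account settings as dicts."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    mysql = dict(parser["db"])
    mysql["port"] = parser.getint("db", "port")  # convert the port to int
    redis_cfg = {
        "host": parser.get("redis", "host"),
        "port": parser.getint("redis", "port"),
    }
    account = dict(parser["zhihu"])
    return mysql, redis_cfg, account


mysql_cfg, redis_cfg, account = load_settings(SAMPLE_CONFIG)
print(mysql_cfg["host"], mysql_cfg["port"], redis_cfg["port"])
```

To read a file on disk instead of a string, `parser.read("config.ini", encoding="utf-8")` can replace `read_string`.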

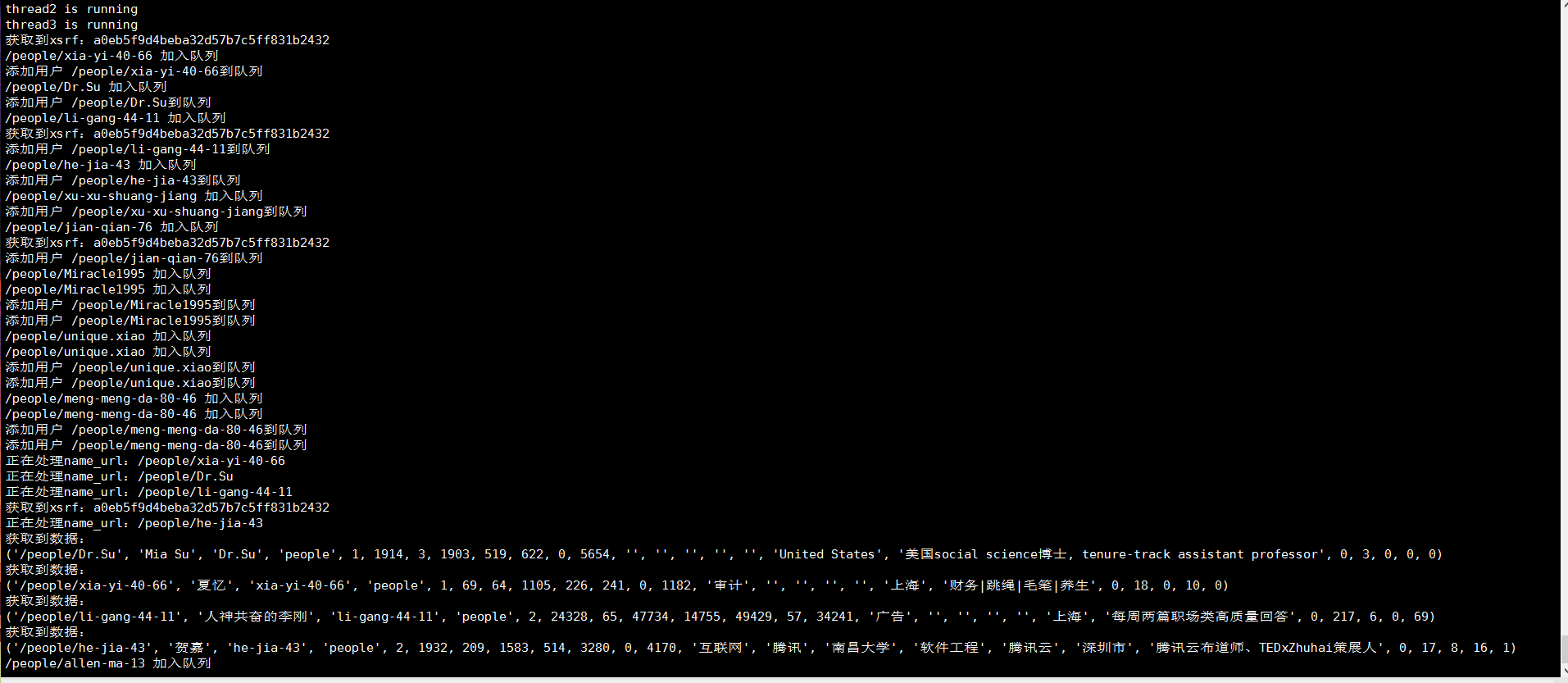

Start crawling: python get_user.py

Check how many users have been crawled: python check_redis.py
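
For readers curious what a progress check might look like, here is a rough sketch. It is not the contents of check_redis.py. It assumes crawled user IDs are stored in a Redis set, and the key name `crawled_users` is hypothetical. A small in-memory stand-in replaces the Redis client so the example runs without a server.

```python
def crawled_count(client, key):
    """Return the number of members in the Redis set stored at `key`."""
    return client.scard(key)


class FakeRedis:
    """In-memory stand-in exposing only the `scard` call used above."""

    def __init__(self, sets):
        self._sets = sets

    def scard(self, key):
        # Like Redis, report 0 for a key that does not exist.
        return len(self._sets.get(key, set()))


# "crawled_users" is a hypothetical key name chosen for this sketch.
fake = FakeRedis({"crawled_users": {"user-a", "user-b", "user-c"}})
print("Users crawled so far:", crawled_count(fake, "crawled_users"))
```

With the real `redis` package, the same function could be given `redis.Redis(host="127.0.0.1", port=6379)` as the client.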

If that sounds like too much trouble, here is the basic environment I put together with Docker. MySQL and Redis both use the official images:

    docker run --name mysql -itd mysql:latest
    docker run --name redis -itd redis:latest

Then run the Python image with docker-compose. My docker-compose.yml for Python:

    python:
      container_name: python
      build: .
      ports:
        - "84:80"
      external_links:
        - memcache:memcache
        - mysql:mysql
        - redis:redis
      volumes:
        - /docker_containers/python/www:/var/www/html
      tty: true
      stdin_open: true
      extra_hosts:
        - "python:192.168.102.140"
      environment:
        PYTHONIOENCODING: utf-8
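
The `PYTHONIOENCODING: utf-8` entry ties in with the requirement that the runtime handle Chinese text: it makes Python's standard streams use UTF-8. A quick way to check an environment is to test whether its stdout encoding can represent Chinese characters. This is a small sketch; the helper name `can_encode` is made up for illustration.

```python
import sys


def can_encode(text, encoding):
    """Return True if `text` can be encoded with the named codec."""
    try:
        text.encode(encoding)
    except (UnicodeEncodeError, LookupError):
        # UnicodeEncodeError: the codec cannot represent the characters.
        # LookupError: the codec name is unknown.
        return False
    return True


# Fall back to ASCII when stdout does not report an encoding.
stdout_encoding = getattr(sys.stdout, "encoding", None) or "ascii"
if can_encode("知乎", stdout_encoding):
    print("stdout can print Chinese text using", stdout_encoding)
else:
    print("stdout cannot print Chinese text; try PYTHONIOENCODING=utf-8")
```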

My Dockerfile:

    FROM kong36088/zhihu-spider:latest
